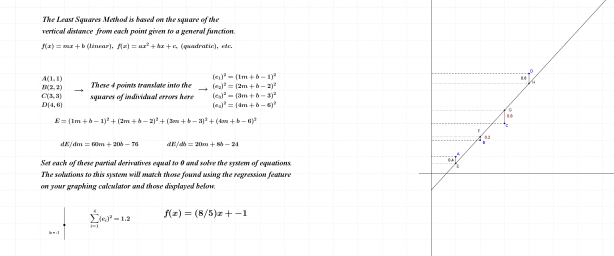

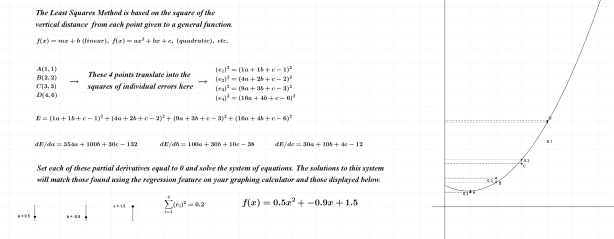

The Least Squares Method is based on the square of the vertical distance (the error) from each given data point to a general function; this function can be linear, quadratic, cubic, and so on.

Squaring these errors removes the “+/-” issue that arises because some points lie above the desired function and others below it. The sum of the squared errors (E) must then be differentiated with respect to each of the parameters in the general function being used.

There will be two partial derivatives for linear functions, three for quadratic functions, and so on. Each partial derivative is then set equal to zero, since our objective is to minimize the error. For linear regression, this produces two equations in two unknowns; quadratic regression yields three equations in three unknowns, and the pattern continues for higher degrees. These equations must be solved simultaneously, so that the resulting parameter values all meet the objective of minimizing the error at once.
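As a sketch of how this plays out in the linear case, assume the general function is written $y = mx + b$ and the data points are $(x_i, y_i)$ for $i = 1, \dots, n$. These symbols are chosen here for illustration only. Setting both partial derivatives of E to zero and rearranging gives two equations in the two unknowns $m$ and $b$:

```latex
\begin{align*}
E(m, b) &= \sum_{i=1}^{n} \left( y_i - m x_i - b \right)^2 \\[4pt]
\frac{\partial E}{\partial m} &= -2 \sum_{i=1}^{n} x_i \left( y_i - m x_i - b \right) = 0 \\
\frac{\partial E}{\partial b} &= -2 \sum_{i=1}^{n} \left( y_i - m x_i - b \right) = 0 \\[4pt]
m \sum_{i=1}^{n} x_i^2 + b \sum_{i=1}^{n} x_i &= \sum_{i=1}^{n} x_i y_i \\
m \sum_{i=1}^{n} x_i + b\, n &= \sum_{i=1}^{n} y_i
\end{align*}
```

The last two lines are the system of equations in two variables that students would solve by substitution or elimination.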

Students will recognize very quickly from their work in Math 20-1 the systems of equations in two variables that emerge from the linear regression shown below. By exploiting matrix operations on their graphing calculators, students can make quick work of solving simultaneous equations in three (or more) variables. This would be a perfect spot from which to launch the study of linear combinations of vectors; a completely different perspective on minimizing errors awaits here.

Partial Derivatives: When differentiating with respect to a particular parameter, all other parameters are treated as constants.
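To make this concrete, here is a small worked example for a single hypothetical data point $(2, 5)$ and a line written as $y = mx + b$ (both the point and the symbols are assumptions made for illustration). The squared error for that one point is $e$, and each partial derivative uses the chain rule while holding the other parameter fixed:

```latex
\begin{align*}
e &= (5 - 2m - b)^2 \\
\frac{\partial e}{\partial m} &= 2\,(5 - 2m - b)\cdot(-2) = -4\,(5 - 2m - b)
  && \text{($b$ held constant)} \\
\frac{\partial e}{\partial b} &= 2\,(5 - 2m - b)\cdot(-1) = -2\,(5 - 2m - b)
  && \text{($m$ held constant)}
\end{align*}
```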

Linear Regression

Click on the link provided to explore linear regression.
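For readers who would like to check the arithmetic by machine, the following is a minimal Python sketch of the linear case. It assumes the line is written as y = m*x + b; the function name `linear_least_squares` and the sample data are hypothetical. It builds the two equations that come from setting the partial derivatives to zero and solves them with Cramer's rule, which is only one of several reasonable ways to solve a 2-by-2 system.

```python
def linear_least_squares(points):
    """Fit y = m*x + b to (x, y) pairs by solving the two normal equations."""
    n = len(points)
    sx = sum(x for x, _ in points)        # sum of x
    sy = sum(y for _, y in points)        # sum of y
    sxx = sum(x * x for x, _ in points)   # sum of x^2
    sxy = sum(x * y for x, y in points)   # sum of x*y

    # Setting dE/dm = 0 and dE/db = 0 gives:
    #   m*sxx + b*sx = sxy
    #   m*sx  + b*n  = sy
    det = sxx * n - sx * sx
    if det == 0:
        raise ValueError("all x values are identical; no unique line exists")

    # Cramer's rule for the 2x2 system above.
    m = (sxy * n - sx * sy) / det
    b = (sxx * sy - sx * sxy) / det
    return m, b


# Hypothetical sample data, chosen only for illustration.
points = [(1, 2), (2, 3), (3, 5), (4, 4)]
m, b = linear_least_squares(points)
print(f"y = {m:.2f}x + {b:.2f}")  # prints "y = 0.80x + 1.50"
```

Points that already lie on a line, such as (0, 1), (1, 3), (2, 5), should return that line's slope and intercept, which makes a handy sanity check.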

In the spirit of consistency, I’ve included a quadratic regression below for comparison to the previous linear counterpart.

Quadratic Regression

Click on the link provided to explore quadratic regression.
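The quadratic case can be sketched in Python the same way. This version assumes the function is written as y = a*x^2 + b*x + c; the function names and the sample data are hypothetical. Setting the three partial derivatives to zero gives three equations in a, b, and c, which are solved here by Gaussian elimination, standing in for the matrix operations a graphing calculator would perform.

```python
def solve_linear_system(matrix, rhs):
    """Solve matrix * x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    # Work on an augmented copy so the inputs are left unchanged.
    aug = [[float(v) for v in row] + [float(r)] for row, r in zip(matrix, rhs)]
    for col in range(n):
        # Pick the row with the largest entry in this column as the pivot.
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:  # illustrative tolerance
            raise ValueError("system is singular or nearly singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        # Eliminate this column from every row below the pivot.
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    # Back substitution, from the last row upward.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        known = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - known) / aug[r][r]
    return x


def quadratic_least_squares(points):
    """Fit y = a*x^2 + b*x + c to (x, y) pairs via the three normal equations."""
    n = len(points)
    s1 = sum(x for x, _ in points)
    s2 = sum(x ** 2 for x, _ in points)
    s3 = sum(x ** 3 for x, _ in points)
    s4 = sum(x ** 4 for x, _ in points)
    t0 = sum(y for _, y in points)
    t1 = sum(x * y for x, y in points)
    t2 = sum(x ** 2 * y for x, y in points)
    # dE/da = 0:  a*s4 + b*s3 + c*s2 = t2
    # dE/db = 0:  a*s3 + b*s2 + c*s1 = t1
    # dE/dc = 0:  a*s2 + b*s1 + c*n  = t0
    matrix = [[s4, s3, s2], [s3, s2, s1], [s2, s1, n]]
    return solve_linear_system(matrix, [t2, t1, t0])


# Hypothetical sample data lying exactly on y = x^2 - 2x + 3.
points = [(x, x ** 2 - 2 * x + 3) for x in range(-2, 3)]
a, b, c = quadratic_least_squares(points)
print(round(a, 6), round(b, 6), round(c, 6))
```

Because the sample points lie exactly on a parabola, the fit should recover its coefficients up to floating-point rounding; with noisy data the same code returns the parabola that minimizes E.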

As mentioned earlier, the subject of this entry provides a very nice point from which to commence the study of linear combinations; that perspective will eventually be linked here.

Thanks for reading.